Introduction: Visionaries

The story of AI music isn’t just about circuits, algorithms, or code – it’s about people. Over the past century, composers and inventors have imagined new ways to use technology, transforming the potential of music. Each generation of pioneers has challenged tradition: Luigi Russolo with his noise machines, George Antheil with his mechanical chaos. The immersive soundscapes of Edgard Varèse inspired composers such as Delia Derbyshire, who painstakingly spliced tape to create new timbres. Meanwhile, the trained architect Iannis Xenakis applied a mathematical approach to his compositions, and Brian Eno later introduced us to the new realm of ambient environments.

These innovators didn’t merely adopt technology; they shaped it, bending machines to fit their artistic visions. Their experiments laid the groundwork for today’s AI composers, who carry forward the same spirit of rebellion and innovation into the digital age. This article traces their journey, illustrating how human imagination – working hand in hand with technology – has taken us from Futurist noise to neural networks.

A radio broadcast, produced for RTHK Radio 3 and now available on SoundCloud, features excerpts from five key composers, including George Antheil, Edgard Varèse, Delia Derbyshire, and Brian Eno, culminating in AIVA’s orchestral AI composition Among the Stars (Symphonic Fantasy in A Minor, Op. 31) (2018).

1900–1930 Noise and Rebellion

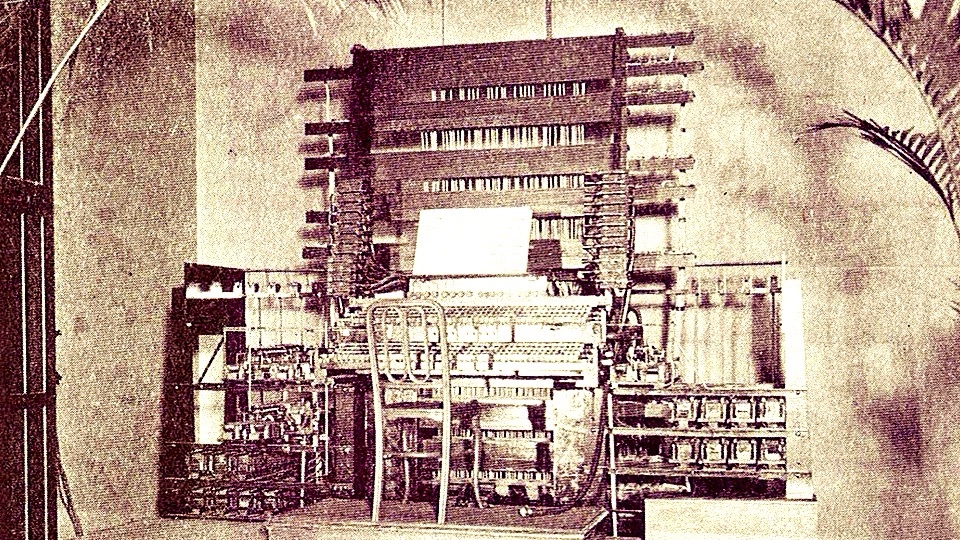

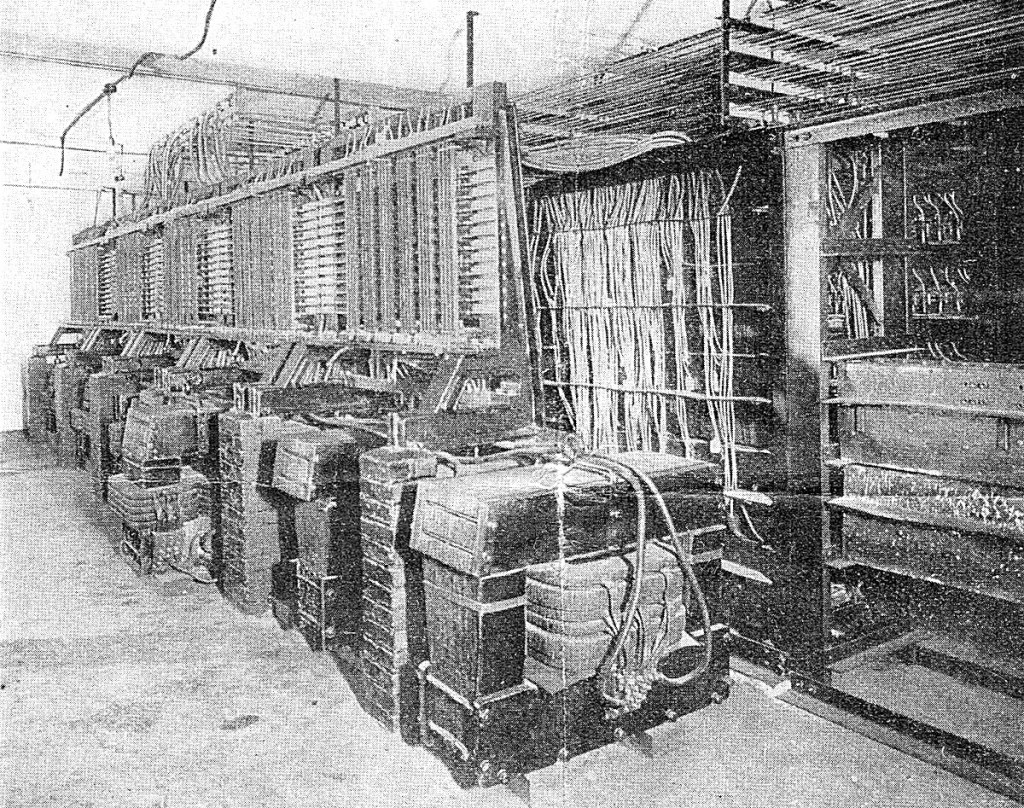

In 1906, Thaddeus Cahill’s Telharmonium was first demonstrated to the public at Telharmonic Hall in New York. The first electromechanical synthesiser, it weighed over 200 tons and used rotating tone wheels to generate sound. It influenced later instruments, including the Hammond organ (1930s), the RCA Mark II Synthesiser (1957), and modern digital synthesisers. The following year, in 1907, Ferruccio Busoni published Sketch for a New Aesthetic of Music – an essay calling for a radical reimagining of music that embraced technology, microtones, and electronic sounds. Busoni’s ideas anticipated algorithmic composition and electronic sound generation decades before the technology existed.

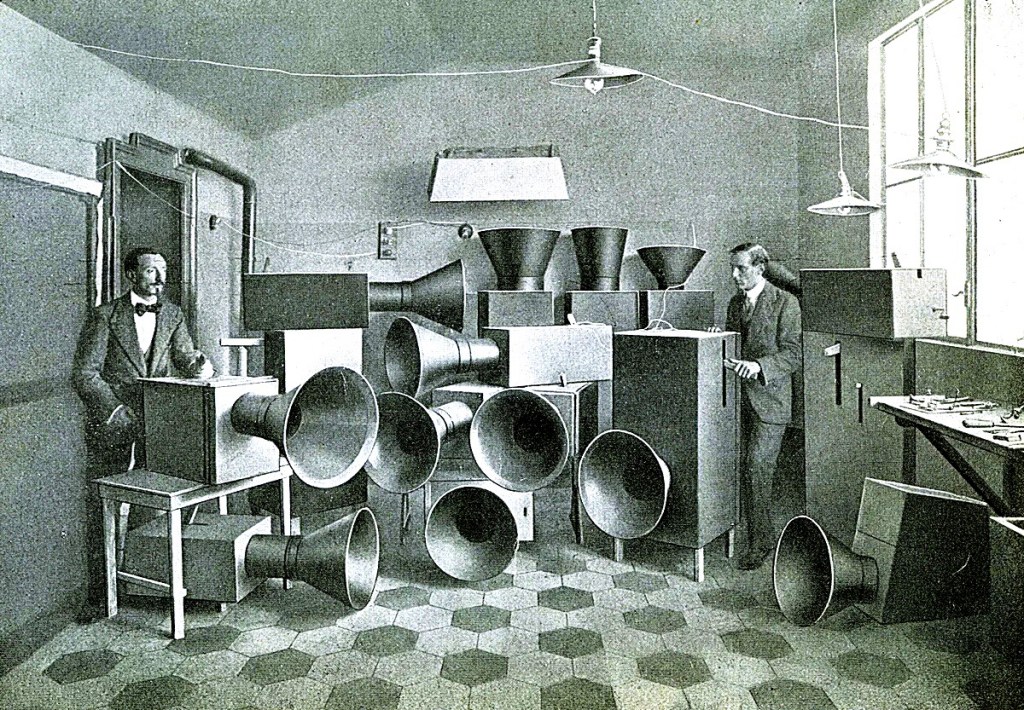

An even more radical voice emerged in 1913 when Luigi Russolo declared the orchestra obsolete. In The Art of Noises, he argued that factories, trains, and crowds create the true music of the modern age. He built intonarumori machines that produced controlled chaos. When he premiered them in Milan around 1913–1914, the audience reacted with uproar and excitement.

George Antheil soon pushed mechanisation further. Between 1923 and 1924, he composed Ballet Mécanique for 16 synchronised player pianos, percussion, electric bells, a siren, and aeroplane propellers. The technology of the day could not keep 16 player pianos in sync, so the 1926 Paris premiere was given in a reduced scoring, and the audience erupted in uproar. Yet Antheil’s mechanical polyrhythms foreshadowed MIDI sequencing (introduced in 1983) and algorithmic beat generation, and his radical approach inspired Conlon Nancarrow and Frank Zappa.

1930–1960 Organising Sound

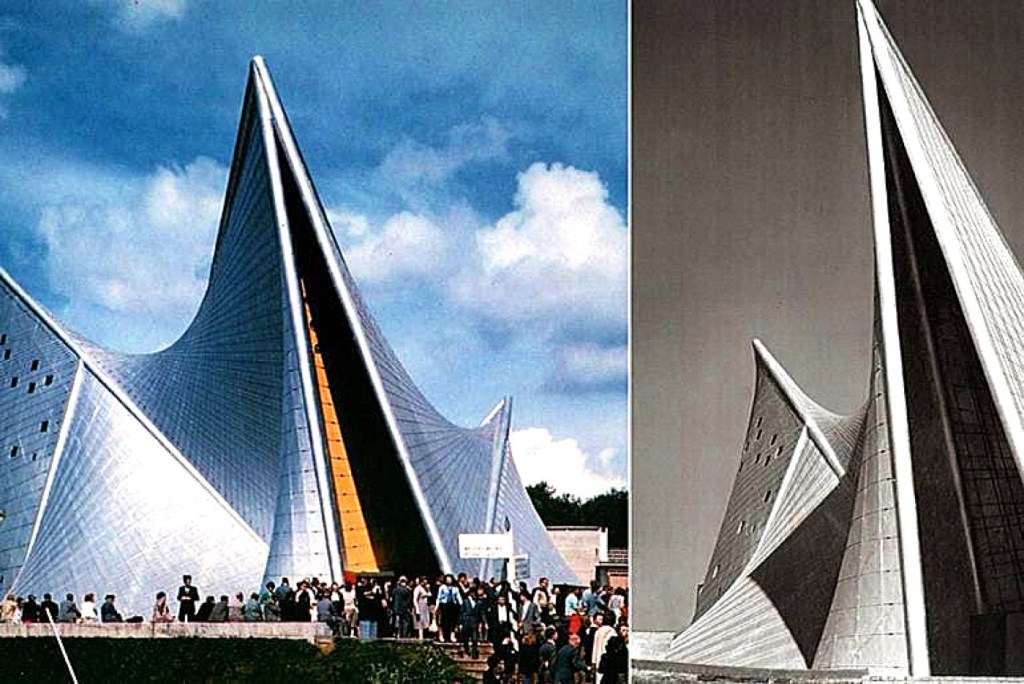

Between 1918 and 1921, Edgard Varèse composed Amériques (revised in 1927), a major orchestral work blending modernist dissonance, rhythmic complexity, and ‘sound masses’. The work leaned heavily on percussion (including 11 percussionists and sirens) and was among the first to treat percussion as a dominant, independent force in the orchestra. Decades later, he turned sound into architecture with the groundbreaking Poème électronique (1958). Working with Le Corbusier, who designed the Philips Pavilion for the 1958 Brussels World’s Fair, and Iannis Xenakis, who served as architectural assistant, Varèse installed approximately 325 loudspeakers in the building, immersing listeners in a moving soundscape.

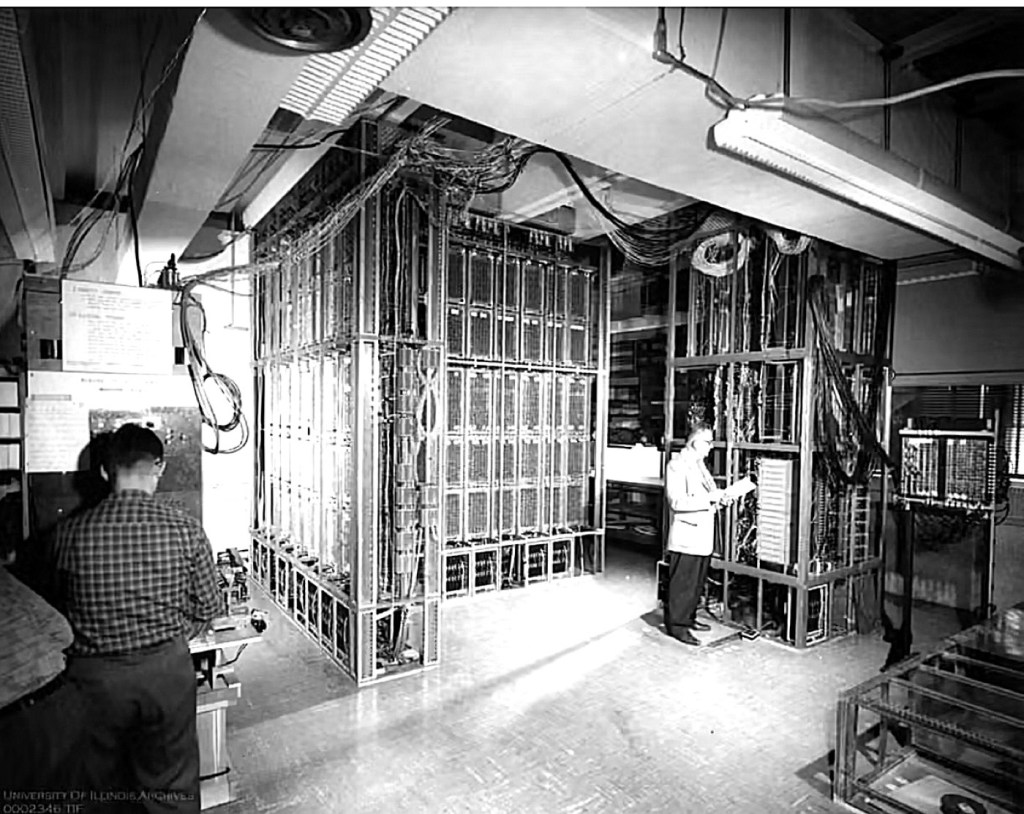

In 1951, Christopher Strachey programmed the Ferranti Mark I (derived from the Manchester Mark I) to play melodies, with Alan Turing’s involvement in the machine’s programming environment. The computer played God Save the King, and the BBC recording made that year is the earliest known recording of computer-played music. The machine performed an existing tune rather than composing one, but the event marks the beginning of music-making with digital technology.

Karlheinz Stockhausen composed Gesang der Jünglinge (Song of the Youths) in 1955–1956, a fusion of electronic and vocal sound on tape, inspired by a Biblical story (Daniel 3:1–30, the Song of the Three Youths). It combines the voice of a boy soprano (12-year-old Josef Protschka) with electronically generated sounds, spliced, looped, and sped up to create otherworldly vocal textures.

Computers formally entered the compositional story in 1957, when Lejaren Hiller and Leonard Isaacson produced the Illiac Suite (String Quartet No. 4), the first computer-generated score, by applying strict counterpoint rules through algorithmic decision-making: the computer generated random notes and kept only those that passed the rules. That same year, Max Mathews at Bell Labs created MUSIC I, the first digital sound synthesis program. Together, Varèse’s spatial vision and Hiller’s algorithms shifted music from notation to machine-sculpted sound.
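The rule-driven decision-making described above is often summarised as generate-and-test: draw a random note, then keep it only if it breaks no rule. The Python sketch below illustrates that idea under loud assumptions. The scale, the leap limit, and the function names `allowed` and `generate_melody` are hypothetical and chosen for illustration; they are not Hiller and Isaacson’s actual rule set or code.

```python
import random

# Hypothetical, simplified melodic rules for illustration only;
# they are not the rule set used in the Illiac Suite.
SCALE = [60, 62, 64, 65, 67, 69, 71, 72]  # C major as MIDI note numbers
MAX_LEAP = 5  # largest allowed interval between neighbours, in semitones


def allowed(melody, candidate):
    """Return True if the candidate note breaks none of the toy rules."""
    if not melody:
        return candidate == SCALE[0]  # rule 1: start on the tonic
    previous = melody[-1]
    if candidate == previous:
        return False  # rule 2: no immediate repetition
    return abs(candidate - previous) <= MAX_LEAP  # rule 3: no large leaps


def generate_melody(length, rng):
    """Draw random notes and keep only those that pass every rule."""
    melody = []
    while len(melody) < length:
        candidate = rng.choice(SCALE)
        if allowed(melody, candidate):
            melody.append(candidate)
    return melody


melody = generate_melody(8, random.Random(1957))
print(melody)
```

Each run with a different seed yields a different melody, yet every result obeys the same constraints, which is the essential trade between chance and rule that this kind of composition relies on.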

1960–1980 Tape, Chance, and Sci‑Fi Sound

Delia Derbyshire at the BBC Radiophonic Workshop realised Ron Grainer’s Doctor Who theme in 1963. She spliced tape loops, layered oscillators, and crafted each note by hand. The BBC refused to credit her on-screen (until the 50th anniversary in 2013), but her theme became one of the first fully electronic TV signatures and influenced generations of electronic composers.

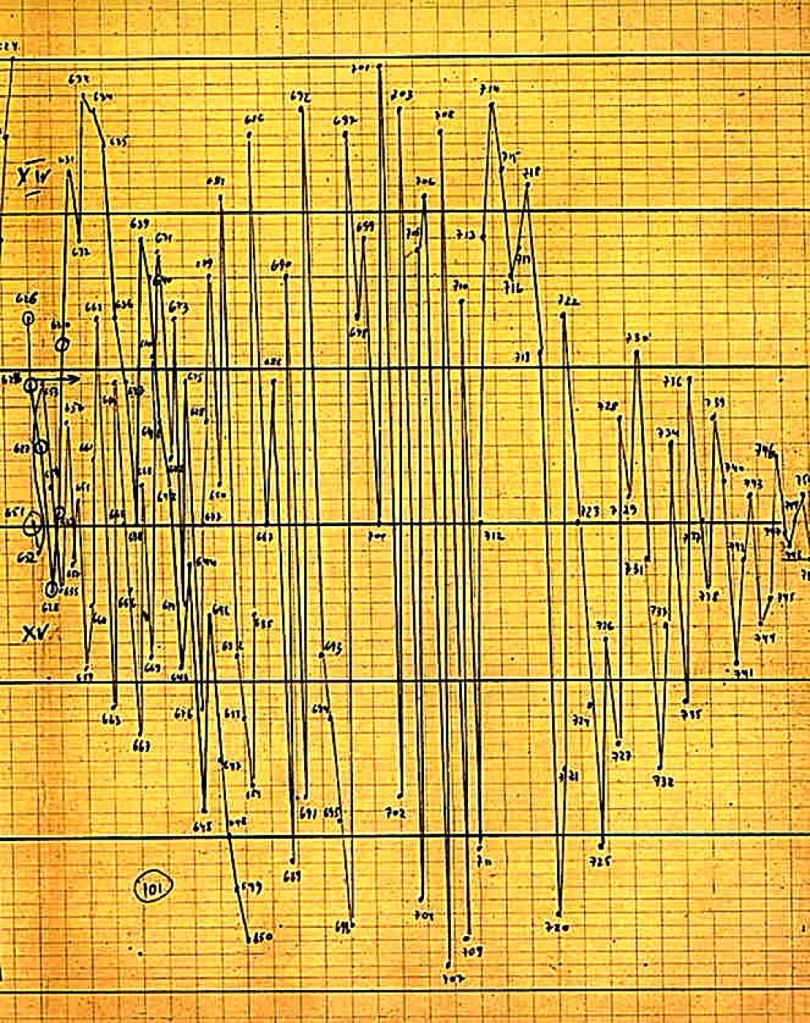

In the same period, Iannis Xenakis brought mathematics into his compositions. In Achorripsis (1957), he used probability theory, in what he called stochastic music, to decide pitch, duration, density, and overall structure. The piece is a fixed score, not free-form or improvised, but the distribution of its sound events across time and instrumental groups follows statistical laws rather than traditional melodic or harmonic planning. Later, in 1977–1978, Xenakis created the UPIC system, which let composers draw waveforms and shapes on a graphics tablet and turn them directly into electronic music.
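To give a feel for this statistical planning, the sketch below fills a grid of cells (instrumental groups by time blocks) with event counts drawn from a Poisson distribution, the law usually associated with Achorripsis. It is a minimal Python illustration, not Xenakis’s procedure: the grid size, the mean of 0.6 events per cell, and the names `poisson_sample` and `event_matrix` are assumptions made for the example.

```python
import math
import random

# Illustrative assumptions, not a reconstruction of the score:
ROWS, COLUMNS = 7, 28  # instrumental groups x time blocks
MEAN_EVENTS = 0.6      # average number of sound events per cell


def poisson_sample(mean, rng):
    """Draw one Poisson-distributed integer using Knuth's method."""
    threshold = math.exp(-mean)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def event_matrix(rows, columns, mean, rng):
    """Fill a rows x columns grid with independent Poisson event counts."""
    return [[poisson_sample(mean, rng) for _ in range(columns)]
            for _ in range(rows)]


matrix = event_matrix(ROWS, COLUMNS, MEAN_EVENTS, random.Random(0))
for row in matrix:
    # Empty cells print as dots so the sparse texture is easy to see.
    print(" ".join(str(n) if n else "." for n in row))
```

Most cells come out empty, some hold a single event, and a few hold clusters. Once such a grid is fixed, it can be written out as an ordinary score, which is how a chance-derived design can still produce a fixed piece.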

1980–2000 Brian Eno and the Rise of Generative Music

Brian Eno’s Ambient 1: Music for Airports was released in February 1979 (copyright sometimes listed as 1978), marking the birth of ambient music as a defined genre – music designed to be ‘as ignorable as it is interesting.’ Using tape loops, phasing, and minimalist piano and vocal textures, Eno crafted an atmospheric soundscape intended to calm airport passengers, transforming music into an environmental utility rather than a performance. The album’s slowly shifting loops created a sense of timelessness and space, influencing everything from AI-generated ambient tools to modern background playlists. Eno’s radical idea – that music could be functional, not just expressive – laid the groundwork for today’s algorithmic compositions, where machines now generate endless, evolving soundscapes in the same spirit.
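The sense of timelessness that comes from slowly shifting loops can be shown with a little arithmetic: when loops of different lengths run side by side, their combined pattern only repeats after the least common multiple of those lengths. The Python sketch below uses hypothetical loop lengths (17, 23, and 29 seconds) that are assumptions for illustration, not measurements from the album’s tapes; the helper names are likewise invented.

```python
from math import lcm  # available in Python 3.9 and later

# Hypothetical loop lengths in seconds, not taken from the album's tapes.
LOOP_LENGTHS = [17, 23, 29]


def realignment_period(lengths):
    """Seconds until every loop restarts at the same moment again."""
    return lcm(*lengths)


def restarts_at(second, lengths):
    """Indices of the loops that begin a new cycle at the given second."""
    return [i for i, length in enumerate(lengths) if second % length == 0]


period = realignment_period(LOOP_LENGTHS)
print(period)                         # 11339 seconds
print(restarts_at(23, LOOP_LENGTHS))  # only the second loop restarts: [1]
```

With these assumed lengths, three short loops take just over three hours to line up again, so a listener passing through hears a combination that, for practical purposes, never repeats.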

The first commercial generative music software arrived in 1995–1996: Koan Pro was released in 1995, and Eno’s Generative Music 1, made with SSEYO’s Koan software, appeared in 1996. The software allowed users to set rules and generate endless, non-repeating variations. Koan Pro’s musical philosophy – generative, rule-based, ever-changing – prefigures modern AI apps such as Boomy with its chill presets. Although Eno’s analogue loops had become digital algorithms, the foundational concept remained: music that evolves organically through systems rather than fixed composition.

2000–2026 AI Orchestral Compositions

AIVA (Artificial Intelligence Virtual Artist), created in 2016, became the first AI to be officially recognised as a composer by SACEM – a milestone publicly reported in 2017. Its 2018 symphonic work, Among the Stars (Symphonic Fantasy in A Minor, Op. 31), premiered by the CMG Orchestra Hollywood under conductor John Beal, arguably shows that AI systems can produce orchestral music with convincing emotional expressiveness.

AIVA composes music using deep learning and reinforcement learning, trained on thousands of classical scores, including works by Bach, Mozart, Beethoven, and Tchaikovsky. Rule-based algorithms ensure that AIVA’s compositions often follow classical structures such as sonata form. Although AIVA’s orchestral works demonstrate that AI can attempt to emulate human emotion, they also raise ethical questions about whether AIVA is merely a tool or a composer.

Taryn Southern’s I AM AI, released in 2018, marked a groundbreaking moment as the first pop album by a solo artist co-created with artificial intelligence. The album blends Southern’s songwriting and vocals with compositions generated by tools including Amper Music, IBM Watson Beat, Google’s Magenta, and AIVA. Rather than hiding the AI’s role, Southern embraced it as a collaborative partner, using algorithms to craft melodies, harmonies, and even lyrical ideas.

The album’s futuristic pop sound proved that AI could thrive beyond classical or experimental music, entering the mainstream pop landscape. Southern’s work not only demonstrated the creative potential of human-AI collaboration but also sparked conversations about authorship, emotion, and the role of technology in art – questions that continue to define the evolving relationship between musicians and machines.

2020s: Democratisation, Lawsuits, and the Future of AI Music

The rise of AI music platforms like Suno, Udio, and Boomy has democratised music creation, allowing anyone to compose professional-quality tracks with a few clicks. But this accessibility has sparked legal battles, with major labels (UMG, Sony, Warner) suing AI platforms over unauthorised use of copyrighted training data.

As the industry grapples with authorship, ownership, and fairness, the future of AI music hinges on three key developments:

- Licensed AI tools (where artists opt into training datasets for royalties)

- New genres born from human-AI collaboration

- A potential backlash, with some artists rejecting AI in favour of handmade music

The question is no longer whether AI can compose, but how artists will share the creative – and financial – rewards.

Epilogue: The Human Need to Explore

The story of AI music isn’t really about machines replacing humans. It’s about something far older and deeper: our need to explore. From Russolo’s noise machines to AIVA’s symphonies, every leap forward in music technology has been driven by the same human impulse – to push boundaries, break rules, and discover what lies beyond the familiar.

Creativity has never been about the tools we use. It’s about the questions we ask. What if we turn noise into music? What if we let chance guide the melody? What if a machine could dream up sounds we’ve never heard? The piano didn’t replace the harpsichord; it expanded what was possible. The synthesiser didn’t kill the orchestra; it opened new worlds of sound. AI won’t replace composers – it will challenge us to redefine what creativity means.

As AI develops its own skills and ideas – regardless of human influence – will it supersede us? The answer lies not in technology, but in what it means to be human. Machines can imitate, generate, and even surprise us, but they don’t yearn. They don’t struggle. They don’t rebel against their own limitations. Creativity isn’t just about producing something new; it’s about the desire to explore, the courage to fail, and the joy of discovery. AI may one day compose music that moves us in ways we can’t yet imagine. But it will never need to create. It won’t wake up in the middle of the night with a melody in its head, or spend years searching for a sound that doesn’t yet exist. That’s the one thing machines can’t replicate: the human hunger to go beyond. AI will challenge us to listen deeper, think bigger, and keep exploring. Because in the end, that’s what creativity has always been about: not the tools we use, but the boundaries we break.

This article was drafted with the assistance of Le Chat Pro, an AI assistant developed by Mistral AI. The analysis, perspectives, and interpretations presented are solely those of the author.

Key sources include:

- Historical accounts of early electromechanical instruments (e.g., Thaddeus Cahill’s Telharmonium, 1906);

- Ferruccio Busoni’s Sketch for a New Aesthetic of Music (1907);

- Luigi Russolo’s The Art of Noises (1913) and the intonarumori;

- Edgard Varèse’s Poème électronique (1958) and collaborations with Le Corbusier and Iannis Xenakis;

- Christopher Strachey and Alan Turing’s early computer music experiments (1951);

- Lejaren Hiller and Leonard Isaacson’s Illiac Suite (1957);

- Delia Derbyshire’s work at the BBC Radiophonic Workshop (1963);

- Iannis Xenakis’s Achorripsis (1957) and UPIC system (1977–1978);

- Brian Eno’s Ambient 1: Music for Airports (1978/1979) and Koan Pro (1995–1996);

- AIVA’s recognition by SACEM (2017) and Among the Stars (2018);

- Taryn Southern’s I AM AI (2018);

- Legal developments regarding AI music platforms (2020s).
